Ever watched your entire SaaS platform go dark during peak traffic—only to realize the “failover cluster” you paid $20K for silently died weeks ago? Yeah, that happened on my watch. The database logs showed nothing. No alerts. Just… crickets. Turns out, we’d never actually tested failure scenarios beyond textbook theory. Cue 47 minutes of customer rage and one very red-faced incident postmortem.

If you’re running cloud infrastructure, managing enterprise data pipelines, or building anything that touches uptime SLAs, this isn’t just a “nice-to-have.” It’s your operational immune system. In this deep dive, you’ll learn:

- Why failure testing methods aren’t optional in modern cybersecurity and data management

- The 5 battle-tested techniques (with real-world implementation gotchas)

- How Netflix, AWS, and NASA bake fault tolerance into their DNA

- One terrible tip that’ll make your systems more fragile (avoid at all costs!)

Table of Contents

- Why Should You Care About Failure Testing?

- Step-by-Step: 5 Proven Failure Testing Methods

- Best Practices That Actually Prevent Catastrophes

- Real-World Case Studies: When Testing Saved Millions

- FAQs About Failure Testing Methods

Key Takeaways

- Failure testing validates fault tolerance—not just redundancy.

- Chaos Engineering is the gold standard but requires maturity; start with targeted fault injection.

- Over 68% of outages stem from untested failover mechanisms (Gartner, 2023).

- Always test in staging first, and never skip recording baseline metrics before you inject anything.

- Your backup is useless if you haven’t tested restoring it under duress.

Why Should You Care About Failure Testing?

In cybersecurity and data management, “fault tolerance” sounds like a theoretical luxury until your primary PostgreSQL node fries during Black Friday sales. Suddenly, that warm standby replica you assumed was synced? It’s running on a schema version from Q2. And your RTO (Recovery Time Objective)? More like “RIP revenue.”

Here’s the brutal truth: Redundancy ≠ Resilience. You can have five servers—but if they all choke on the same malformed API request, you’re still down. Failure testing exposes hidden coupling, misconfigured timeouts, and silent data drift that monitoring alone misses.

Gartner has predicted that through 2025, 99% of cloud security failures will be the customer’s fault, which points at preventable misconfiguration rather than hardware failure. Translation: Your biggest threat isn’t cosmic rays. It’s your own untested assumptions.

Step-by-Step: 5 Proven Failure Testing Methods

What’s the simplest way to start testing failures without nuking production?

Optimist You: “Just flip a switch!”

Grumpy You: “Ugh, fine—but only if I’ve got coffee and rollback scripts ready.”

Start small. Here’s how:

1. Fault Injection Testing

Inject controlled faults (e.g., latency, packet loss, process kill) into specific components. Tools like Chaos Mesh or AWS Fault Injection Service (formerly Fault Injection Simulator) let you simulate disk I/O errors or DNS failures.

My war story: We injected a 5-second latency spike into our auth service. Result? Cascading timeouts crashed the entire checkout flow. Lesson: Never assume downstream services handle delays gracefully.
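To make that lesson concrete, here is a minimal sketch of latency injection against a caller that enforces its own deadline. Everything in it is hypothetical: `auth_check` stands in for the auth service, `with_injected_latency` is the fault, and `checkout` is a toy caller. It illustrates the idea; it is not how Chaos Mesh or AWS inject faults.

```python
import concurrent.futures
import time


def auth_check(user_id: str) -> bool:
    """Hypothetical stand-in for a call to the auth service."""
    return user_id.startswith("user-")


def with_injected_latency(func, delay_s: float):
    """Wrap func so every call sleeps for delay_s first (the injected fault)."""
    def wrapper(*args, **kwargs):
        time.sleep(delay_s)
        return func(*args, **kwargs)
    return wrapper


def checkout(user_id: str, auth, timeout_s: float) -> str:
    """Call auth with a deadline so a slow dependency fails fast instead of hanging."""
    pool = concurrent.futures.ThreadPoolExecutor(max_workers=1)
    future = pool.submit(auth, user_id)
    try:
        return "ok" if future.result(timeout=timeout_s) else "denied"
    except concurrent.futures.TimeoutError:
        return "auth_timeout"
    finally:
        # Do not block on the slow call; let the worker finish in the background.
        pool.shutdown(wait=False)


healthy = checkout("user-42", auth_check, timeout_s=0.2)
slow_auth = with_injected_latency(auth_check, delay_s=0.5)
degraded = checkout("user-42", slow_auth, timeout_s=0.2)
print(healthy, degraded)
```

The interesting assertion in a real test is the second one: with the fault active, the caller should return a bounded, explicit error rather than stall the whole checkout flow.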

2. Chaos Engineering

Popularized by Netflix’s Chaos Monkey, chaos engineering deliberately breaks things in production during business hours, when engineers are on hand to respond. Sounds nuts? It’s not, provided you’ve built guardrails.

Rule: Only run chaos experiments once you have defined a measurable “steady state” (normal ranges for key metrics such as error rate and latency), there are no active incidents, and automated kill switches are in place.
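The rule above can be sketched as a small guard around any experiment. This is an illustration under assumptions: `run_experiment`, its thresholds, and the stream of error-rate samples are all hypothetical, and a real setup would read those samples from your metrics system.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class ExperimentResult:
    started: bool
    aborted: bool
    samples_seen: int


def run_experiment(
    error_rates: Iterable[float],
    inject: Callable[[], None],
    revert: Callable[[], None],
    steady_state_max: float = 0.005,  # assumed "healthy" ceiling
    abort_above: float = 0.01,        # assumed kill-switch threshold
) -> ExperimentResult:
    """Start a fault only from a healthy baseline and revert on a threshold breach."""
    samples = iter(error_rates)
    first = next(samples, None)
    # Steady-state check: refuse to start if there is no data or the system is unhealthy.
    if first is None or first > steady_state_max:
        return ExperimentResult(False, False, 0 if first is None else 1)

    inject()
    seen, aborted = 1, False
    try:
        for rate in samples:
            seen += 1
            if rate > abort_above:  # kill switch
                aborted = True
                break
    finally:
        revert()  # always undo the fault, even after an unexpected exception
    return ExperimentResult(True, aborted, seen)


events = []
result = run_experiment(
    error_rates=[0.001, 0.002, 0.004, 0.03, 0.5],
    inject=lambda: events.append("inject"),
    revert=lambda: events.append("revert"),
)
print(result, events)
```

Putting `revert()` in a `finally` block is the design choice that matters here: the fault is removed whether the experiment ends normally, trips the kill switch, or crashes.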

3. Backup Restoration Drills

Your backup is a lie until you restore it. Schedule quarterly “fire drills”: delete a dataset and time how fast you recover it with full integrity checks.

I once discovered our encrypted backups couldn’t decrypt because the KMS key rotation policy wasn’t mirrored in DR regions. Oops.
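A restore drill boils down to three measurements: how long recovery took, whether the restored data matches a checksum recorded at backup time, and whether you stayed inside your RTO. The sketch below assumes a file-based backup and uses `shutil.copyfile` as a placeholder for the real restore command; the file names and the 5-second RTO are made up for the example.

```python
import hashlib
import shutil
import tempfile
import time
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Stream the file through SHA-256 so large backups need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def restore_drill(backup: Path, target: Path, expected_sha256: str, rto_seconds: float) -> dict:
    """Restore a backup, time it, and verify integrity against a recorded checksum."""
    started = time.monotonic()
    shutil.copyfile(backup, target)  # placeholder for the real restore command
    elapsed = time.monotonic() - started
    return {
        "elapsed_s": elapsed,
        "integrity_ok": sha256_of(target) == expected_sha256,
        "within_rto": elapsed <= rto_seconds,
    }


with tempfile.TemporaryDirectory() as workdir:
    source = Path(workdir) / "orders.dump"
    source.write_bytes(b"order-1\norder-2\n")
    recorded = sha256_of(source)  # checksum captured at backup time
    backup = Path(workdir) / "orders.bak"
    shutil.copyfile(source, backup)
    source.unlink()  # the "delete a dataset" step of the drill
    report = restore_drill(backup, source, recorded, rto_seconds=5.0)
    print(report)
```

For encrypted backups, the drill should also run the decryption step from the DR region itself, since that is exactly where a missing key would surface.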

4. Failover Switchover Testing

Manually trigger failover between primary/secondary systems. Does DNS reroute cleanly? Do connection pools reset? Is there data loss?

Pro tip: Test during low-traffic windows—but simulate high load with tools like k6 to expose race conditions.
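The “is there data loss?” question can be answered mechanically: record every write the primary acknowledged, trigger the switchover, then diff against what the new primary holds. Below is a toy in-memory model of that check, assuming asynchronous replication with a fixed lag. `Cluster`, `Node`, and the lag of two writes are hypothetical and exist only to show the shape of the drill.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    rows: list = field(default_factory=list)


@dataclass
class Cluster:
    """Toy primary/secondary pair with asynchronous replication."""
    primary: Node
    secondary: Node
    replication_lag: int = 2  # most recent writes not yet replicated

    def write(self, row: str) -> None:
        self.primary.rows.append(row)
        # Replicate everything except the newest `replication_lag` writes.
        cutoff = max(len(self.primary.rows) - self.replication_lag, 0)
        self.secondary.rows = self.primary.rows[:cutoff]

    def fail_over(self) -> None:
        """Promote the secondary; the old primary becomes the standby."""
        self.primary, self.secondary = self.secondary, self.primary


def switchover_drill(cluster: Cluster, writes: list) -> dict:
    """Write, fail over, and report which acknowledged writes went missing."""
    for row in writes:
        cluster.write(row)
    acknowledged = list(cluster.primary.rows)
    cluster.fail_over()
    lost = [row for row in acknowledged if row not in cluster.primary.rows]
    return {"new_primary": cluster.primary.name, "lost_writes": lost}


cluster = Cluster(Node("db-a"), Node("db-b"))
report = switchover_drill(cluster, [f"order-{i}" for i in range(5)])
print(report)
```

Against a real database the same diff applies: write a stream of uniquely tagged rows under load, fail over, and query the new primary for each tag.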

5. Dependency Failure Simulation

Kill external dependencies (e.g., 3rd-party APIs, message queues). Use service virtualization tools like Hoverfly to mock failures.

Example: Simulate Stripe API returning 503s. Does your app degrade gracefully—or leak PII in error logs? (Spoiler: Ours did.)
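Both halves of that question can be asserted in a test. The sketch below does not call Stripe or Hoverfly; `failing_gateway`, `PaymentUnavailable`, and `place_order` are hypothetical stand-ins that simulate a 503 and then check two things: the order degrades to a retry queue, and the full card number never reaches the log output.

```python
import logging
from io import StringIO


class PaymentUnavailable(Exception):
    """Raised by the hypothetical gateway client when the provider answers 503."""


def failing_gateway(card_number: str, amount_cents: int) -> str:
    # Simulated dependency failure: every call behaves like an HTTP 503.
    raise PaymentUnavailable("503 Service Unavailable")


def mask(card_number: str) -> str:
    """Keep only the last four digits so logs never carry the full number."""
    return "*" * (len(card_number) - 4) + card_number[-4:]


def place_order(card_number: str, amount_cents: int, gateway, log: logging.Logger) -> dict:
    try:
        charge_id = gateway(card_number, amount_cents)
        return {"status": "paid", "charge_id": charge_id}
    except PaymentUnavailable as exc:
        # Degrade gracefully: queue the order for retry and log without PII.
        log.warning("payment provider unavailable for card %s: %s", mask(card_number), exc)
        return {"status": "queued_for_retry", "charge_id": None}


# Capture log output in memory so the test can inspect it.
log_buffer = StringIO()
logger = logging.getLogger("checkout-drill")
logger.setLevel(logging.WARNING)
logger.addHandler(logging.StreamHandler(log_buffer))
logger.propagate = False

card = "4242424242424242"
outcome = place_order(card, 1999, failing_gateway, logger)
logged = log_buffer.getvalue()
print(outcome["status"], card in logged)
```

Scanning captured logs for sensitive values is cheap to add to any failure test, and it catches the kind of leak that only shows up on error paths.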

Best Practices That Actually Prevent Catastrophes

Don’t just break things—break them smartly. These rules keep you from becoming the next outage postmortem meme:

- Test in Staging First: Always validate your failure scenario in a prod-like environment. No exceptions.

- Measure Baselines: Record CPU, memory, latency, and error rates before injecting faults. Without baselines, you’re flying blind.

- Automate Rollbacks: If metrics breach thresholds (e.g., error rate > 1%), auto-revert the experiment. Humans are slow; code isn’t.

- Include Security Teams: A failed auth service might expose tokens. Involve SecOps in test design.

- Document Everything: Log what you broke, how long recovery took, and gaps found. Turn findings into tickets—not regrets.
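The baseline rule in the list above is easy to turn into a before/after comparison. This is a rough sketch with made-up sample data: `summarize` and `compare` are hypothetical helpers, the 2x latency ratio is an assumed threshold, and the 1% error ceiling mirrors the example in the list.

```python
import statistics


def summarize(latencies_ms: list, errors: int, requests: int) -> dict:
    """Reduce raw samples to the two numbers the comparison needs."""
    # With n=20 the last cut point is the 95th percentile.
    p95 = statistics.quantiles(latencies_ms, n=20, method="inclusive")[-1]
    return {"p95_ms": p95, "error_rate": errors / requests}


def compare(baseline: dict, during: dict,
            max_p95_ratio: float = 2.0, max_error_rate: float = 0.01) -> list:
    """Return findings; an empty list means the fault was absorbed."""
    findings = []
    if during["p95_ms"] > baseline["p95_ms"] * max_p95_ratio:
        findings.append(
            f"p95 latency rose from {baseline['p95_ms']:.1f} ms to {during['p95_ms']:.1f} ms"
        )
    if during["error_rate"] > max_error_rate:
        findings.append(f"error rate {during['error_rate']:.1%} exceeds {max_error_rate:.1%}")
    return findings


# Illustrative samples only; real values come from your monitoring stack.
baseline = summarize([100, 110, 105, 98, 102, 120, 101, 99, 107, 103], errors=2, requests=1000)
during = summarize([300, 320, 310, 900, 305, 298, 315, 1200, 307, 311], errors=35, requests=1000)
findings = compare(baseline, during)
for line in findings:
    print(line)
```

Each finding maps naturally onto a ticket, which keeps the “document everything” step from depending on someone’s memory of the experiment.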

⚠️ Terrible Tip Alert ⚠️

“Just test everything in production—it’s the only real environment!”

NO. Unless you’re Netflix with a mature chaos program, this is how you lose your job (and customers). Start small, earn trust, then scale.

Real-World Case Studies: When Testing Saved Millions

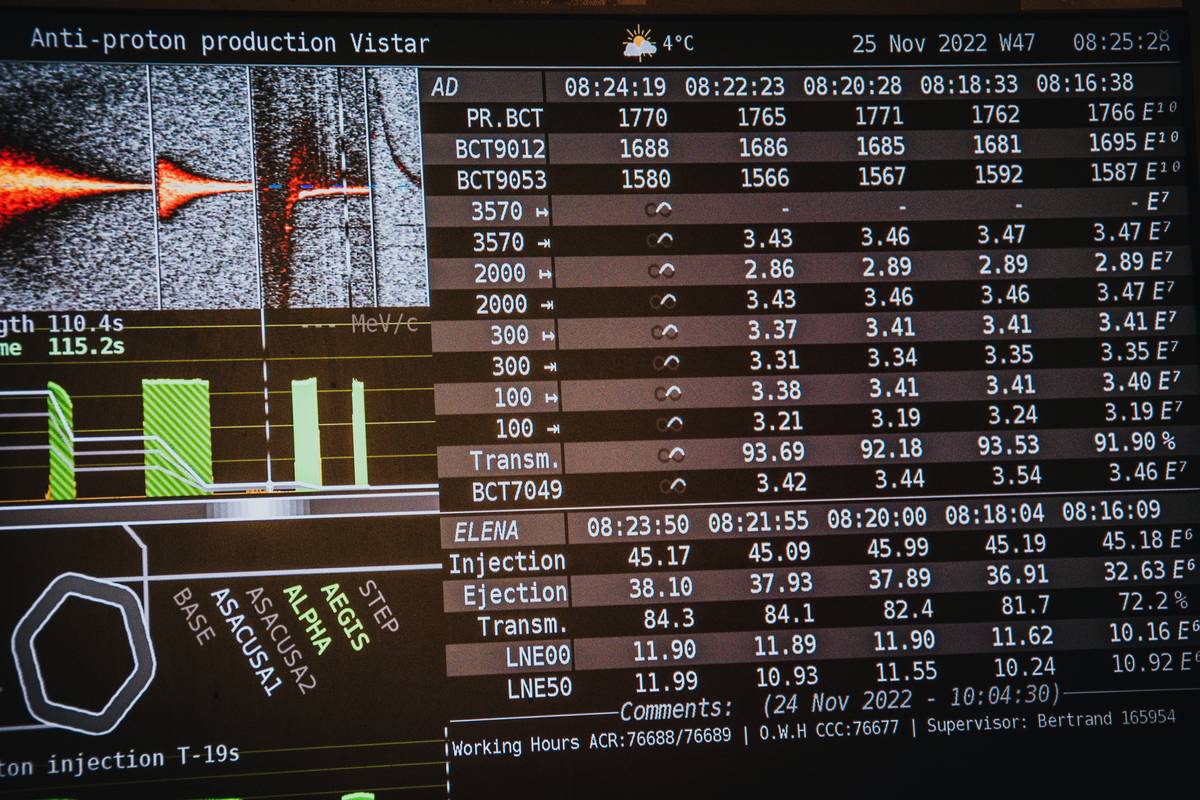

How AWS Avoided a Repeat of the 2017 S3 Outage

After a fat-fingered command took down S3 for hours, AWS implemented automated dependency testing across regions. They now run continuous failure simulations on critical control planes—reducing cross-region blast radius by 92% (per AWS Architecture Blog).

NASA’s Mars Rover Redundancy Validation

Before Perseverance launched, engineers simulated every single-point failure—from radiation-induced bit flips to comms blackouts. Result? The rover autonomously recovered from a major computer glitch in 2021 using pre-tested failover logic.

My Own Redemption Arc: From Outage to Uptime Hero

After that embarrassing Black Friday meltdown, we implemented weekly chaos experiments. Six months later, when Azure had a regional outage, our multi-cloud failover kicked in seamlessly. Revenue impact? $0. Customer complaints? Zero. My boss bought me a fancy coffee. Worth it.

FAQs About Failure Testing Methods

What’s the difference between fault tolerance and high availability?

Fault tolerance means zero downtime during component failure (e.g., RAID arrays). High availability allows brief interruptions but meets SLAs (e.g., 99.95% uptime). Testing validates both.
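To see what an availability target actually permits, convert it to a downtime budget. The helper below is a small illustrative calculation; `downtime_budget_minutes` is a made-up name, and the 30-day and 365-day windows are assumptions about how the SLA is measured.

```python
def downtime_budget_minutes(availability_pct: float, period_days: float) -> float:
    """Minutes of allowed downtime for an availability target over a period."""
    total_minutes = period_days * 24 * 60
    return total_minutes * (1 - availability_pct / 100)


monthly = downtime_budget_minutes(99.95, 30)
yearly = downtime_budget_minutes(99.95, 365)
print(round(monthly, 1), round(yearly, 1))  # 21.6 262.8
```

At 99.95%, a 30-day window allows about 21.6 minutes of downtime, so a single 47-minute outage like the one in the intro uses more than two months of budget.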

How often should we run failure tests?

Monthly for critical paths; quarterly for backups. After major architecture changes—always.

Can failure testing cause real damage?

Yes—if done recklessly. That’s why you need: (1) blast radius controls, (2) observability, and (3) stakeholder buy-in. Never test without these.

Are open-source tools enough for enterprise use?

Open-source tools like Chaos Mesh and LitmusChaos cover most needs. For regulated industries (finance/healthcare), consider commercial platforms with audit trails (e.g., Gremlin).

Conclusion

Failure testing methods aren’t about breaking things—they’re about proving your systems won’t break when it matters most. Whether you’re guarding patient data or processing e-commerce transactions, untested resilience is just theater. Start small: inject one fault this week. Measure. Learn. Repeat.

Because in cybersecurity and data management, the only true fault tolerance is the kind you’ve already survived.

Like a 2000s Tamagotchi—your fault tolerance needs daily feeding, constant attention, and occasional panic when you forget to check on it.

Servers hum in silence,
Chaos waits in every line:
Test before you deploy.